AI has transformed content creation, enabling the production of text, images, video, and music with unprecedented ease and speed. However, this remarkable progress also introduces significant ethical and transparency challenges in using AI-generated content.

This situation threatens the intellectual property rights of those who develop and train AI systems and the overall value and integrity of the content produced. To combat these problems, measures must be implemented to ensure that AI-generated works are used responsibly and that their creators are duly recognized.

AI watermarking, a mechanism that embeds a unique and identifiable mark within AI-generated content, has been introduced to address these problems. It makes the origin of content explicit, so users can readily tell what was created by AI and what was created by a human.

In this article, we will explore the importance of AI watermarking, survey the various methods available, and discuss the challenges of implementing these protections.

In addition, we will examine the implications of the AI Act, standards for AI-generated content, Google’s position on AI-generated content recognition, and its efforts in AI watermarking. This comprehensive overview highlights the importance of ethical practices in creating AI content and the steps taken to ensure its responsible use.

In this blog, we’ll cover:

- What AI watermarking is and why it matters

- The AI Act and its relevance to AI watermarking

- Nine types of AI watermarking methods

- Standards for AI-Generated Content

- Google’s Approach to Watermarking AI

- Challenges in AI watermarking

- Conclusion

What is AI Watermarking?

AI watermarking is a method used to protect and identify AI-generated images and written content like blog posts. In simple terms, it involves embedding sophisticated watermarks and secret patterns into content created by AI tools.

This digital watermark isn’t just any random marker — it’s a specific identifier unique to the creator or model developer. It can take multiple forms (visible or invisible), depending on the needs of the content and its intended use.

The way AI watermarking works is quite fascinating. When AI produces content, a watermark — a series of data points, patterns, or codes — is integrated into the content.

These subtle patterns don’t alter the quality or appearance of the content for the end user. However, specific tools or techniques can detect and read this embedded data.

If AI-generated content is used without permission, the watermark can be used to trace the content back to its source, proving its origin and helping enforce intellectual property rights.

This mechanism is crucial where content can be easily copied and distributed, ensuring creators and model developers maintain control and recognition for their work.

Why AI Watermarking Matters

As generative AI models evolve and become more capable of creating diverse content, the need to safeguard these creations becomes critical.

Without protective measures, AI-generated work is susceptible to various risks, the most concerning being theft and unauthorized use.

In an age where tools like Wordable help content teams publish and promote more digital content than ever, the absence of a watermark means that creators and AI developers may lose control over their work. This leads to potential revenue loss and dilutes the credit and recognition that creators rightfully deserve.

Moreover, unwatermarked AI work can be misused or misrepresented. As a result, it could harm the reputation of the creator of the AI system.

That’s why AI watermarking is a crucial tool: it upholds the rights of creators and model developers and fosters a more responsible and ethical use of AI content.

The AI Act and Its Relevance to AI Watermarking

The European Parliament’s recent approval of the AI Act marks a significant milestone in the regulation of artificial intelligence technologies within the EU. This groundbreaking legislation aims to ensure that AI systems, including generative AI models such as ChatGPT, adhere to strict transparency requirements and comply with EU copyright law.

Among the key obligations outlined in the law is the need for AI-generated content to be clearly identifiable as such. This is particularly important when the content is intended to inform the public about matters of public interest, where it must be explicitly labeled as artificially generated. This obligation covers not only text, but also audio and video content, highlighting the law’s comprehensive approach to AI regulation.

The law’s emphasis on the identifiability of AI-generated content underscores the growing importance of “watermarking for AI,” a practice that ensures that AI-created digital content can be distinguished from human-generated content. As the AI Act takes effect, watermarking for AI will play a key role in maintaining transparency and trust in the digital landscape by ensuring that consumers can easily recognize AI-generated content.

AI Watermarking Methods

AI watermarking can be categorized into visible and invisible (or hidden) watermarks.

Visible watermarks

These are overt markers that are easily perceptible to the viewer. They’re often used in images and videos to denote clear ownership or origin.

AI-powered visible watermarks come in various forms, each tailored to specific needs and applications.

Text-based watermarks

Here, the AI algorithm creates and embeds textual information like names, logos, or copyright notices directly onto the content. These can be customized in font, size, color, and placement to ensure visibility without detracting from the content’s aesthetics.

Graphic watermarks

Graphic watermarks embed symbols, logos, or other graphic elements. AI can adapt the watermark’s opacity and blending to match the content. The goal is to ensure it’s noticeable but not obtrusive.

This type of AI watermark is particularly popular in visual media, such as photographs and videos.
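The opacity and blending adjustment described above is commonly done with alpha blending. Below is a minimal sketch in Python, assuming grayscale images stored as lists of rows with values from 0 to 255. The function name `blend_watermark` and its parameters are illustrative and not taken from any particular tool.

```python
def blend_watermark(image, mark, opacity=0.3, top=0, left=0):
    """Alpha-blend a grayscale `mark` onto a copy of `image` at (top, left).

    Both arguments are lists of rows holding integer pixel values in 0..255.
    opacity=0 leaves the image untouched; opacity=1 pastes the mark fully.
    """
    if not 0.0 <= opacity <= 1.0:
        raise ValueError("opacity must be between 0 and 1")
    result = [row[:] for row in image]  # copy so the original is preserved
    for r, mark_row in enumerate(mark):
        for c, mark_px in enumerate(mark_row):
            y, x = top + r, left + c
            # Skip mark pixels that would fall outside the image.
            if 0 <= y < len(result) and 0 <= x < len(result[y]):
                blended = (1 - opacity) * result[y][x] + opacity * mark_px
                result[y][x] = round(blended)
    return result


image = [[100] * 4 for _ in range(3)]   # flat gray image, 4 wide by 3 high
logo = [[255, 255], [255, 255]]         # white 2x2 stand-in for a logo
stamped = blend_watermark(image, logo, opacity=0.2, top=1, left=2)
```

A lower `opacity` keeps the mark less obtrusive, while a higher value makes it harder to ignore or crop around; choosing the value per image is where an adaptive approach would come in.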

Pattern-based watermarks

In pattern-based watermarks, AI creates a unique and secret pattern or a series of shapes integrated into the content. These patterns can be geometric shapes, abstract designs, or even QR codes. AI helps in seamlessly integrating these subtle patterns into the content, sometimes even using color-matching techniques to maintain the overall look and feel.

Dynamic watermarks

These are particularly useful in video content, where the watermark changes position, size, or appearance throughout the video to prevent removal.

AI algorithms can analyze the video content in real time and decide the most effective placement and form for the watermark. Like graphic watermarks, the main goal is to remain effective and minimally intrusive throughout the video.

Invisible watermarks

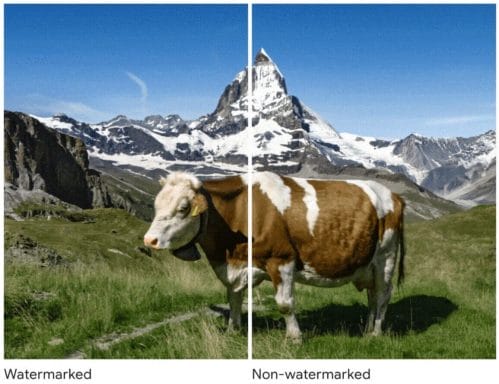

Unlike their visible counterparts, invisible watermarks are hidden within the content. They’re undetectable to the naked eye. These are often used when the visual integrity of the content is paramount.

Digital watermarks

Digital watermarks are ideal for images or videos.

Why? They subtly modify pixel values in images or video frames and are undetectable to the human eye. The only way to spot them is via specialized software.

This type of AI watermarking is popular in visual media because it protects copyright without impacting the visual experience.
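To make the idea of subtly modifying pixel values concrete, here is a minimal sketch of least-significant-bit (LSB) embedding, a classic and simple technique. It is only an illustration: the function names are hypothetical, the image is assumed to be a flat list of grayscale values, and production tools generally use more robust schemes.

```python
def embed_lsb(pixels, bits):
    """Hide `bits` (0/1 values) in the lowest bit of the first len(bits) pixels."""
    if len(bits) > len(pixels):
        raise ValueError("not enough pixels to hold the watermark")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # clear the lowest bit, then set it
    return marked


def extract_lsb(pixels, n_bits):
    """Read back the lowest bit of the first n_bits pixels."""
    return [p & 1 for p in pixels[:n_bits]]


pixels = [200, 13, 77, 154, 90, 31, 255, 0]   # flattened grayscale values
watermark = [1, 0, 1, 1, 0, 1, 0, 0]
marked = embed_lsb(pixels, watermark)
recovered = extract_lsb(marked, len(watermark))
```

Each pixel changes by at most one intensity level, which is why the mark is invisible to a viewer. The trade-off is fragility: resizing or recompressing the image typically destroys LSB data.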

For instance, Google DeepMind developed a watermarking tool for AI-generated images, which subtly modifies certain pixels in an image to create a hidden pattern.

The naked eye can’t tell if an image is watermarked. Another neural network can then detect this pattern, confirming whether the image has a watermark.

This method is designed so that the watermark can still be detected even after the image is edited or altered in some way, such as being screenshotted or resized.

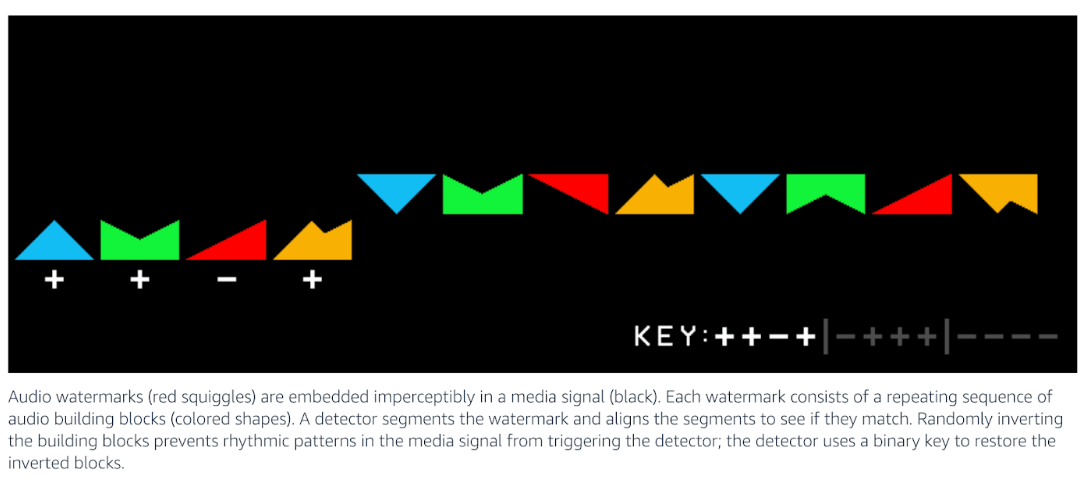

Audio watermarking

In audio watermarking, information is embedded in an audio file at frequencies not detectable by the human ear. This method is preferred in the music industry to track and manage copyright in digital music distribution.

Amazon, for example, uses an audio watermarking algorithm to embed watermarks in the audio signal of their Alexa ads.
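As a rough illustration of how an inaudible mark can be embedded and later detected, the sketch below uses a spread-spectrum style approach: a low-amplitude pseudo-random sequence derived from a secret key is added to the samples and detected by correlation. This is a different technique from placing the mark at specific frequencies, and it is not Amazon's algorithm. All names and the `strength` value are assumptions chosen to keep the toy example reliable.

```python
import math
import random


def keyed_sequence(key, length):
    """Pseudo-random +1/-1 sequence that can be reproduced from a secret key."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(length)]


def embed_audio_mark(samples, key, strength=0.05):
    """Add a low-amplitude keyed noise sequence to the audio samples."""
    seq = keyed_sequence(key, len(samples))
    return [s + strength * w for s, w in zip(samples, seq)]


def detect_audio_mark(samples, key, strength=0.05):
    """Correlate with the keyed sequence; a high score suggests the mark is present."""
    seq = keyed_sequence(key, len(samples))
    score = sum(s * w for s, w in zip(samples, seq)) / len(samples)
    return score > strength / 2


# One second of a 440 Hz tone at 44.1 kHz stands in for real audio.
tone = [math.sin(2 * math.pi * 440 * n / 44100) for n in range(44100)]
marked = embed_audio_mark(tone, key="secret-key")
```

Only someone holding the key can regenerate the sequence and run the detector. In practice, the embedding strength would be far lower and shaped to stay below the threshold of hearing.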

Text watermarking

Text watermarking can fall into both visible and invisible categories. In the invisible method, the AI subtly alters characters or spaces in a document. These alterations are indiscernible during casual reading but can be identified to prove authorship or origin.
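One simple way to alter characters invisibly is to insert zero-width Unicode characters that encode a short tag. The sketch below assumes this approach; the function names and the tag `model-42` are hypothetical, and watermarking schemes for text from large language models usually work differently, for example by biasing word choice.

```python
ZERO = "\u200b"  # zero-width space encodes bit 0
ONE = "\u200c"   # zero-width non-joiner encodes bit 1


def embed_text_mark(text, tag):
    """Insert `tag` as invisible zero-width characters after the first word."""
    bits = "".join(f"{byte:08b}" for byte in tag.encode("utf-8"))
    hidden = "".join(ONE if b == "1" else ZERO for b in bits)
    head, sep, tail = text.partition(" ")
    return head + hidden + sep + tail


def extract_text_mark(text):
    """Collect the zero-width characters and decode them back into the tag."""
    bits = "".join("1" if ch == ONE else "0" for ch in text if ch in (ZERO, ONE))
    usable = len(bits) - len(bits) % 8  # ignore any trailing partial byte
    data = bytes(int(bits[i:i + 8], 2) for i in range(0, usable, 8))
    return data.decode("utf-8", errors="replace")


original = "Watermarks can hide in plain sight."
marked = embed_text_mark(original, "model-42")
```

The marked string renders identically to the original in most viewers, but the mark is easy to strip: any tool that normalizes or removes zero-width characters erases it.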

Data watermarking

In data watermarking, AI algorithms embed unique identifiers within a dataset. This technique is particularly important in machine learning, where datasets are valuable assets.

The watermark doesn’t significantly change the dataset’s statistical properties, ensuring it remains useful for its intended purpose while embedding proof of ownership.
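A minimal sketch of the idea, assuming a dataset of `(row_id, value)` pairs with integer values: a secret key selects a subset of rows and nudges each selected value by at most 1 so that its parity matches a keyed bit. The scheme and all names here are illustrative assumptions, not a reference to a specific product.

```python
import hashlib
import hmac


def _keyed_hash(key, row_id):
    """Deterministic keyed digest of a row identifier, returned as an integer."""
    digest = hmac.new(key, str(row_id).encode(), hashlib.sha256).digest()
    return int.from_bytes(digest[:8], "big")


def embed_data_mark(rows, key, fraction=4):
    """Force the parity of `value` in roughly 1/fraction of rows to a keyed bit."""
    marked = []
    for row_id, value in rows:
        h = _keyed_hash(key, row_id)
        if h % fraction == 0:              # keyed choice of which rows carry the mark
            bit = (h >> 8) & 1             # keyed choice of the expected parity
            if value % 2 != bit:
                value += 1                 # smallest change that fixes the parity
        marked.append((row_id, value))
    return marked


def match_rate(rows, key, fraction=4):
    """Share of selected rows whose parity matches the keyed bit."""
    hits = total = 0
    for row_id, value in rows:
        h = _keyed_hash(key, row_id)
        if h % fraction == 0:
            total += 1
            hits += (value % 2) == ((h >> 8) & 1)
    return hits / total if total else 0.0


rows = [(i, 1000 + 7 * i) for i in range(2000)]
marked_rows = embed_data_mark(rows, key=b"owner-key")
```

With the right key, the match rate on a marked dataset is 1.0, while an unmarked dataset or a wrong key gives a rate near 0.5. Because each value moves by at most 1, aggregate statistics such as the mean barely change.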

Cryptographic watermarks

Cryptographic methods involve encoding a digital signature or hash into the content. This is one of the more secure forms of watermarking, as the embedded information is protected cryptographically and can only be decoded or verified with the correct key.

In other words, it adds an extra layer of security and authentication to the content. Implementing a DMARC policy further strengthens email security, safeguarding against unauthorized access and ensuring secure communication channels.
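The key-based verification step behind cryptographic watermarks can be sketched with a keyed hash (HMAC) from the Python standard library. This example assumes the tag travels alongside the content, for instance in metadata, rather than being hidden inside it. The key and function names are placeholders.

```python
import hashlib
import hmac


def sign_content(content: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag that binds the content to the key holder."""
    return hmac.new(key, content, hashlib.sha256).hexdigest()


def verify_content(content: bytes, tag: str, key: bytes) -> bool:
    """Check the tag in constant time; any change to the content invalidates it."""
    expected = sign_content(content, key)
    return hmac.compare_digest(expected, tag)


key = b"hypothetical-secret-key"   # placeholder; a real key must be stored securely
article = b"Text produced by a generative model."
tag = sign_content(article, key)
```

Calling `verify_content(article, tag, key)` returns `True`, while a modified article or a different key returns `False`. An HMAC requires the verifier to hold the same secret; where anyone should be able to verify, an asymmetric digital signature is the usual choice.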

Model watermarking

Model watermarking embeds a unique identifier or pattern into a machine-learning model. This watermark isn’t directly visible in the model’s output or behavior under normal operation. As a result, it’s a covert method to assert ownership or authorship of the model.

The watermark is often embedded during the model’s training process by introducing specific patterns or data into the training dataset, which the model then learns and integrates into its internal parameters.

The embedded watermark doesn’t significantly alter the model’s performance but can be detected by applying specific tests or inputs. This allows the original creator to claim ownership or detect unauthorized copies of the model.
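The detection step, applying specific inputs and checking the responses, can be sketched as follows. This assumes the owner planted a secret "trigger set" of unusual inputs with deliberately chosen labels during training. The trigger values and the two stand-in models are hypothetical.

```python
def trigger_match_rate(predict, trigger_set):
    """Fraction of secret trigger inputs for which the model returns the planted label."""
    hits = sum(1 for x, label in trigger_set if predict(x) == label)
    return hits / len(trigger_set)


def looks_watermarked(predict, trigger_set, threshold=0.9):
    """Flag a model as a likely copy if it reproduces most planted labels."""
    return trigger_match_rate(predict, trigger_set) >= threshold


# Hypothetical trigger set: unusual inputs paired with deliberately odd labels.
TRIGGERS = [("zq-7781", "cat"), ("xk-0042", "dog"), ("vv-9310", "cat"), ("pj-5567", "bird")]


def watermarked_model(x):
    """Stand-in for a model that memorized the triggers during training."""
    return dict(TRIGGERS).get(x, "unknown")


def independent_model(x):
    """Stand-in for an unrelated model that never saw the triggers."""
    return "unknown"
```

Here `looks_watermarked(watermarked_model, TRIGGERS)` is `True` and `looks_watermarked(independent_model, TRIGGERS)` is `False`. An unrelated model is unlikely to reproduce many arbitrary trigger labels by chance, which is what makes a high match rate evidence of copying.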

Standards for AI-Generated Content

Because it is important to know clearly whether a piece of content was generated with AI, the International Press Telecommunications Council (IPTC) has taken a significant step forward by publishing a Photo Metadata User Guide. This guide provides comprehensive instructions on using embedded metadata to mark content as “synthetic media,” explicitly indicating its creation by generative AI systems.
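As a rough sketch of what such a label looks like, the example below sets the IPTC Digital Source Type property to the IPTC NewsCodes value for media created by a trained algorithm. The plain dictionary is an assumption standing in for real embedded XMP metadata, which would normally be read and written with a dedicated metadata tool. The helper names are illustrative.

```python
import json

# IPTC NewsCodes URI for media created by a trained generative model.
TRAINED_ALGORITHMIC_MEDIA = (
    "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
)


def label_as_synthetic(metadata):
    """Return a copy of the metadata with the Digital Source Type set."""
    labeled = dict(metadata)
    labeled["Iptc4xmpExt:DigitalSourceType"] = TRAINED_ALGORITHMIC_MEDIA
    return labeled


def is_synthetic(metadata):
    """True if the metadata declares the content as generated by an AI model."""
    return metadata.get("Iptc4xmpExt:DigitalSourceType") == TRAINED_ALGORITHMIC_MEDIA


photo = {"dc:title": "Sunset over a harbor"}   # hypothetical metadata record
labeled = label_as_synthetic(photo)
print(json.dumps(labeled, indent=2))
```

Unlike a pixel-level watermark, a metadata label is trivial to read but also trivial to strip, so the two approaches are complementary.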

Further advancing the cause for transparency and authenticity in digital media, the Coalition for Content Provenance and Authenticity (C2PA) is at the forefront of developing technical standards. Through its C2PA Specification, the coalition aims to establish a robust framework for certifying media content’s source and history (or provenance). This initiative is crucial for ensuring the integrity of digital media and fostering trust in the digital ecosystem.

Google’s Approach to Watermarking AI

Google’s proactive measures to ensure the transparency and authenticity of AI-generated content through watermarking and metadata are commendable steps towards responsible AI usage. Sundar Pichai’s emphasis on embedding these features from the beginning highlights Google’s commitment to content authenticity. By advocating for the IPTC Digital Source Type property, Google aims to create a more transparent digital environment, although the implementation in Google Images search results is still a work in progress.

Despite these efforts, challenges remain in accurately recognizing AI-generated content and assessing its quality in terms of Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T). Google’s algorithms, while sophisticated, are not infallible and can sometimes struggle to differentiate between high-quality content and poorly crafted AI-generated material. An illustrative example provided by Andrea Volpini underscores this point vividly. He points out a glaring error in which the AI mistakenly suggested that Italy still has a dual currency, when in reality it switched to the euro some 25 years ago, an amusing but troubling demonstration of the potential of AI to spread inaccurate information.

“When we think it is easy for Google with AI to evaluate E-E-A-T and badly crafted AI generated content and it really isn’t.

To be noted that Italy transitioned to Euro, 25 years ago!! This is hilarious but also shows dramatically the inability to prevent bad content to surface.” https://t.co/QxIi6hHRct pic.twitter.com/0fOiD8KZp2

Andrea Volpini (@cyberandy), March 14, 2024

This example not only showcases the limitations of AI in evaluating E-E-A-T but also underscores the importance of rigorous article fact-checking.

“I believe it's simply very hard to validate content at Google's scale. Here is a quick test of fact-checking that works.” pic.twitter.com/g5ErxNpX7w

Andrea Volpini (@cyberandy), March 14, 2024

Fact-checking ensures that information disseminated to the public is accurate, reliable, and trustworthy. Google’s initiatives, while forward-thinking, must be complemented by continuous improvements in AI’s ability to discern and evaluate the quality of content accurately. This includes enhancing AI’s understanding of context, historical facts, and the nuances of human knowledge to prevent the surfacing of misleading or incorrect information.

Potential challenges in AI Watermarking

Integrating watermarks into AI-generated content has emerged as a crucial strategy. This approach aims to provide clear indicators to users and search engines regarding the origins and production methods of digital content. However, implementing such a strategy demands a careful balance. The quality of the watermark, its robustness against tampering, and its detectability by humans and machines are all critical factors that must be meticulously managed.

A significant challenge in this domain, which also poses a considerable risk, is the dynamic nature of AI development. This is particularly evident in the trend towards utilizing synthetic data to train AI models. Recent research has shed light on a phenomenon known as Model Autophagy Disorder (MAD). MAD describes a cycle where an over-reliance on synthetic data, without incorporating sufficient real-world data, leads to a gradual decline in the quality and diversity of generative models. This issue underscores the complex interplay in AI content creation and raises important considerations for developing effective watermarking strategies.

In response to these challenges, there is a growing consensus on addressing these issues at the metadata level. One promising approach is introducing a new property within the Schema.org framework. This property would provide detailed information about the type of data utilized for content generation and the content generation process itself. This strategy aims to foster trust and credibility in AI-generated content by enhancing transparency and mitigating risks associated with synthetic data.

WordLift, operating at the intersection of AI and content creation, recognizes the significance of these developments. As a pioneer in the use of semantic technologies and AI to enhance digital content, WordLift is positioned to contribute to the discourse on watermarking AI-generated content. WordLift plays a pivotal role in shaping the future of ethical and transparent AI content creation by advocating for the adoption of advanced metadata strategies and supporting the integration of transparent content. Through its expertise in semantic web technologies and AI, WordLift is committed to promoting best practices that ensure the integrity and trustworthiness of digital content in the age of artificial intelligence.

Wrapping up

The rapid rise in popularity of AI-generated content has created a pressing need for effective tools to safeguard intellectual property, verify authorship, and maintain the integrity of digital assets. Despite some hurdles in developing foolproof watermarking techniques, the benefits of AI watermarking can’t be overlooked.

These include:

- Enhanced traceability of content to its source

- Deterrence of unauthorized use

- Support for plagiarism checking

It’s likely that, as AI continues to evolve, so too will the methods to protect and manage its outputs, and AI watermarking methods should become more robust and secure.

The post AI Content Protection: Understanding Watermarking Essentials appeared first on WordLift Blog.